Contact-tracing apps haven’t made a big impact. Here’s why

Thank you for subscribing to the free edition of the twice-weekly Parallax View newsletter. All issues are free through March 22. After that, you’ll receive one issue per week. If you’d like to support our independent journalism on the intersection of health care and cybersecurity with a paid subscription, you can do so here. If you’d like a subscription option that we don’t currently offer, please email me: seth@the-parallax.com.

As Covid-19 ravaged countries across the world last April, aided in part by how hard it was to manually trace the contacts of people infected with a highly contagious respiratory virus, tech rivals Apple and Google made a rare joint technology announcement: Silicon Valley was here to help. They were building a Covid-19 contact-tracing backend for iPhones and Android phones that would help identify when people infected with the novel coronavirus had been in close physical proximity to people not known to be infected.

Their exposure notification system raised many questions. Would countries build apps using Big Tech’s exposure notification system? If they did, would enough people download them? (The University of Oxford concluded that 60 percent of a population would need to use a contact-tracing app in order for it to be effective.) And even if enough people used them, would they be as effective as traditional manual contact tracing, or more so?

To help build public trust, Apple and Google said their new backend technology, built into their smartphone operating systems, would respect consumer and patient privacy. When the Silicon Valley giants updated their smartphone operating systems with the technology in May, the future of digital contact tracing was murky. Ten months later, 38 countries and 26 U.S. states and territories have built digital contact-tracing smartphone apps that rely on the companies’ exposure notification system, and 11 more countries (and two more U.S. states) use alternative technology.


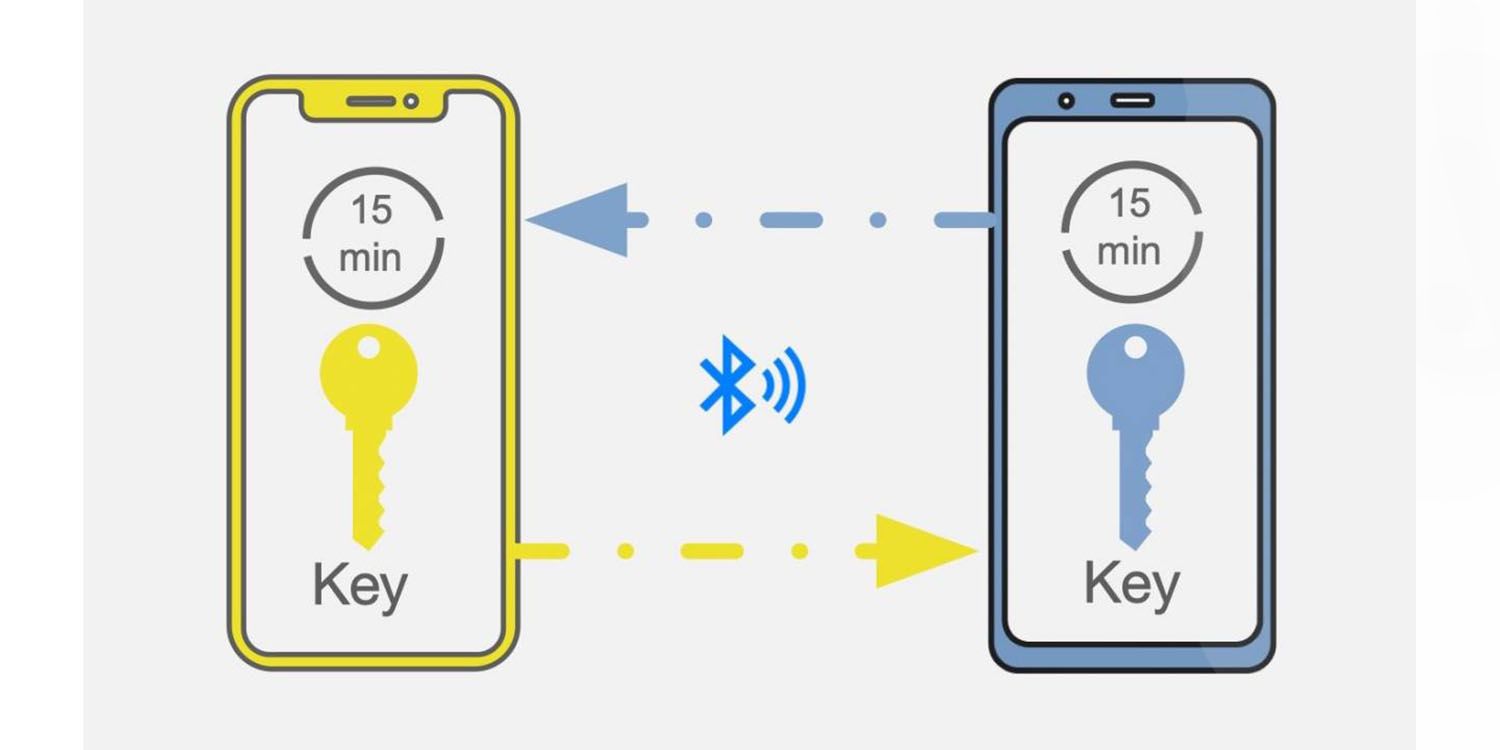

The basic premise of the Apple-Google digital contact-tracing technology is that smartphone owners tell the app on their phone if and when they have been diagnosed with Covid-19. The app, which is opt-in, relies on automatic Bluetooth exchanges between nearby smartphones to pseudonymously identify someone who is not known to be infected but has been in close physical proximity to an infected patient.
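To make that premise concrete, here is a minimal Python sketch of the decentralized matching idea, under heavy simplifying assumptions. Each phone keeps a random daily key (the Apple-Google design calls these Temporary Exposure Keys), derives a new short-lived identifier from it for every 10-minute window, and broadcasts that identifier over Bluetooth; nearby phones store what they hear on the device. If a user reports a diagnosis, only their daily keys go to a health authority’s key server, and every other phone re-derives identifiers from those keys and checks for matches locally. The class `Phone`, the function `rolling_ids`, and the `key_server` list are hypothetical names for illustration, and HMAC-SHA256 stands in for the HKDF and AES-128 derivation the real protocol uses. Signal strength, exposure duration, and risk scoring are left out entirely.

```python
import hashlib
import hmac
import secrets

# 144 ten-minute windows per day, mirroring the Apple-Google design.
INTERVALS_PER_DAY = 144


def new_daily_key() -> bytes:
    """Random per-day key; it leaves the phone only if its owner reports a diagnosis."""
    return secrets.token_bytes(16)


def rolling_ids(daily_key: bytes) -> list[bytes]:
    """Derive one short-lived broadcast identifier per 10-minute window.

    Simplification (assumption): HMAC-SHA256 truncated to 16 bytes stands in
    for the HKDF + AES-128 derivation used by the real protocol.
    """
    return [
        hmac.new(daily_key, i.to_bytes(4, "little"), hashlib.sha256).digest()[:16]
        for i in range(INTERVALS_PER_DAY)
    ]


class Phone:
    """Hypothetical model of one phone's view of the protocol."""

    def __init__(self) -> None:
        self.daily_key = new_daily_key()
        # Identifiers heard over Bluetooth; stored only on this device.
        self.heard: set[bytes] = set()

    def broadcast(self, interval: int) -> bytes:
        """Identifier this phone advertises during the given window."""
        return rolling_ids(self.daily_key)[interval]

    def hear(self, rolling_id: bytes) -> None:
        """Record an identifier broadcast by a nearby phone."""
        self.heard.add(rolling_id)

    def check_exposure(self, published_keys: list[bytes]) -> bool:
        """Re-derive identifiers from diagnosis keys and look for a local match."""
        return any(
            rid in self.heard
            for key in published_keys
            for rid in rolling_ids(key)
        )


# Hypothetical stand-in for a health authority's key server.
key_server: list[bytes] = []

alice, bob, carol = Phone(), Phone(), Phone()
bob.hear(alice.broadcast(interval=42))  # Alice and Bob were near each other once.

# Alice tests positive and, with her consent, uploads her daily key.
key_server.append(alice.daily_key)

print(bob.check_exposure(key_server))    # True: Bob heard one of Alice's identifiers
print(carol.check_exposure(key_server))  # False: Carol never met Alice
```

Because matching happens on each phone, the key server in this sketch never learns who was near whom, which is the design choice that lets contacts be notified pseudonymously.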

Apple and Google spent “hundreds of hours” in the early days of the pandemic developing their exposure notification system, said Ali Lange, a public-policy manager at Google who worked on the technology. Speaking at the cybersecurity and privacy conference Enigma on February 2, she described the competing policy concerns the team weighed while building it.

There’s much more to its success than just getting people to install and activate the app.

“It’s not just having the app on your phone; it’s every other decision you make with your phone,” she said. “Trust was essential not just for people to participate, but [to] participate faithfully. The challenges [of] communicating about how this technology works: it’s very hard to explain technology that’s very new and novel.” A more dramatic path in the beginning, such as forcibly installing the app on smartphones, “could have endangered the entire effort,” she said.

Those questions and others dogged the entire development process, Lange said, and persist to this day. Building a system that could effectively trace and notify people who had been exposed to Covid-19, and encourage them to quarantine or seek medical attention, was a challenge from the start. So was establishing trust in a new collaboration between public health and Big Tech, at a time when such trust has been declining.

There were no easy answers. Updating the historically successful practice of manual contact tracing for the Digital Age in the middle of a global pandemic is a challenge that even Big Tech struggles to master.

For digital contact tracing to be effective, the right kinds of personal data must be shared at the right time with the right people. Getting that right means navigating a swirling, seemingly chaotic mix of technology, policy, privacy, and public-health interests. Developers must contend with the nuances of a user’s health status and geographic location, the smartphone’s unique identifiers, sufficient protection against surveillance, and how long data is retained.

In December, researchers at Citizen Lab published a report on the medically unnecessary data that contact-tracing apps in Indonesia and the Philippines collect from their users, and found that this information can be used with relative ease to identify individuals.

Developers must also contend with privacy concerns, from specific regulatory stipulations that change on a per-country (and per-state) basis, to the more nebulous concept of “trust.” Patients need to be able to trust that the data they share with a contact-tracing app will be used only for its intended purpose—to help identify people who might have Covid-19 before they’ve been diagnosed with it, and to get them diagnosed and treated as early as possible—and not for government surveillance, Big Tech data mining, or targeted advertising.

At least one new study indicates that the Apple-Google technology does help prevent infection. England and Wales introduced a new National Health Service exposure notification app based on the Apple-Google protocol on September 24. Millions of people installed it on their phones in its first few weeks. (By contrast, adoption rates in the United States have been pitiful, according to an Associated Press report in December.)


During the ensuing months, from October 1 to December 31, 1.9 million people in England and Wales were newly infected with Covid-19. Without alerts from the app, between 200,000 and 900,000 more people would have been infected, according to a study by the University of Oxford and the Alan Turing Institute published February 8.

“We all want to stop the pandemic as soon as possible,” said panelist Tiffany C. Li, a technology law professor at the Boston University School of Law. “The issue is, I want to be able to participate in these great public-health efforts without giving up my privacy.”

Current rules and regulations are just not up to the “interdisciplinary” tasks that contact-tracing app developers face, she said, noting that Covid-19 won’t be the last public-health crisis requiring multiple stakeholders, often with competing interests, to work together to protect people.

“We do need to utilize data more,” she said, but we also “need protections.” One way to provide them, she said, is to change current laws, or create new ones, that protect privacy and ensure that people’s data “won’t later be used against them.”

Her concerns were echoed by co-panelist Mike Judd, who leads the Centers for Disease Control and Prevention’s Covid-19 Exposure Notification Initiative. Privacy advocates had an important impact on the development of contact-tracing technology, he said.

The apparent reluctance among Big Tech, the federal government, and global institutions thus far to hoover up vast amounts of patient data via contact-tracing apps is “remarkable,” Judd said. “How do we further optimize the needs of public health and targeted intervention? Is there a way we get towards giving more data for public health without sacrificing security or privacy?”

In addition to sorting out privacy and data collection concerns, the United States faces a massive hurdle in getting all its state and territory apps to communicate with one another so that when people travel between states, they can receive timely notifications if they’ve encountered an infected patient.

“The CDC’s role is to support state, local, territorial, and tribal health departments, and not to be the central authority telling the states what to do,” Judd said, though that wouldn’t prevent the federal government from creating a national key server, which is the heart of the Apple-Google exposure notification technology.

It’s a problem in Europe too. Switzerland, which is not part of the European Union, was not able to use an EU interoperability gateway that lets participating countries’ apps communicate with one another when people from different countries meet, said Marcel Salathé, an associate professor at the Swiss Federal Institute of Technology (EPFL).

“At the end of the day, interoperability is technically feasible, but serious roadblocks exist on the political side of things,” said Salathé, who leads the digital epidemiology lab in Geneva. “The virus reminds us on a daily basis that it doesn’t give a damn about nations and borders.”

A Tweet to live by:

BTW, it's SUPER exciting to me that top peer-reviewed medical journals are beginning to publish work co-authored by security researchers.

When software eats healthcare, doctors and hackers need to build hand in hand. 🏥👾 https://t.co/9r16R7A0pV

Andrea Ruth Coravos (@AndreaCoravos), February 23, 2021

What do you know that I don't?

Got a tip? Know somebody who does? You can reach me via email, Twitter DM, or Signal secure text: 415-730-3194.

Coming next on Tuesday:

Some of you may know that the Cybersecurity and Infrastructure Security Agency (CISA) played a critical role in securing the 2020 elections, but its mandate is much broader than that. The relatively new agency is now working on securing Covid-19 vaccines. What do vaccines have to do with cybersecurity? CISA's chief strategist for Covid response, Josh Corman, explains why the vaccine supply chain is a cybersecurity problem.

Thank you for subscribing to the free edition of The Parallax View! Learn more about our paid subscription options here.