Hackers call for federal funding, regulation of software security

LAS VEGAS—When hackers find a security hole in an app you’re using, should the company distributing it be responsible for patching it? What consequences should it face for leaving it unpatched? And what consequences might you face for continuing to use it unpatched?

Funding and liability for software security took center stage at the annual Black Hat and Defcon hacker conferences held here last week. On the first day of Black Hat, renowned security researcher Dan Kaminsky told a filled-to-capacity room of more than 6,000 hackers, executives, and other security professionals that it is time for an “NIH for cyber.”

Best known for finding a critical flaw in the Domain Name System, the Internet’s de facto address book, and more recently for working to end “clickjacking” attacks, Kaminsky is calling for the establishment of a federal agency akin to the National Institutes of Health to fund security research and help keep the Internet safe for consumers and businesses.

“We need a bunch of nerds able to do down-and-dirty engineering for the good of the Internet,” Kaminsky said in an interview with The Parallax after his keynote. He hopes that his call for more and smarter investment by the U.S. government in Internet and computer security will head off what some experts see as “inevitable”: lethal and severely injurious cyberattacks.

“We will see some known—publicly known—loss of life due to security attacks,” Katie Moussouris, founder and CEO of Luta Security, said at the CodenomiCon pre-Black Hat panel held the night before Kaminsky’s keynote speech. “I think it’s inevitable, and it is coming way sooner than anyone can anticipate or prevent at this point. We don’t have security solved for the technology that’s been deployed for the last 30 years, let alone the new technology,” she said, including many Internet of Things devices.

A fear that organizations or individuals could manipulate software to kill people has moved from the realm of dystopian fiction to real calls by security specialists to enforce efficient patching of vulnerabilities in Internet-connected devices ranging from cars to pacemakers. Car hacking grabbed headlines last year, as a Wired reporter allowed himself to hurtle down the freeway at 70 mph in a Jeep Cherokee while hackers disabled the brakes, a maneuver that led Chrysler to recall 1.4 million vehicles.

Malicious software sneaks through unpatched security holes to deliver its payload. That’s true for yesterday’s malware, for today’s ransomware and, as Moussouris said, attempting to coin a term, ultimately for “killware,” a type of “malware specifically designed to hurt people, to end life.”

Security researchers’ calls for government intervention begin to make sense when one considers the predicted lethal ramifications of leaving a hole unpatched, as well as how few rules currently guide how, why, and when manufacturers should patch their software. Researchers don’t have the power to force vendors to patch their products, they say, but government agencies armed with the legal authority to fine vendors, rule against them, or shut them down would.

Making vendors legally liable for flaws in their software is a huge yet critical task, not unlike instituting and enforcing construction regulations, says Herb Lin, a security policy expert at Stanford University.

“It’s pretty clear that the market has failed to deliver the level of cybersecurity that we need as a nation. If you allow liability, you treat software just like any other product or service,” he says. “But the software industry would hate that.”

Software vendor advocacy organizations, including the Information Technology Industry Council and the Software and Information Industry Association, did not return repeated requests for comment on this story.

Their longstanding argument against security regulation goes something like this: If software makers were liable for vulnerabilities in their software, they’d need to reallocate resources from research and development to legal efforts, as well as raise their prices. Furthermore, as product liability attorney Dana Taschner told Newsweek in 2009, they assert that software is not a “product” and therefore is not covered by product liability laws.

Four years before Taschner made those remarks, Howard Schmidt, former White House cybersecurity adviser and longtime security software industry executive, said regulation enforcing vendor liability for security flaws would decimate the industry.

“I always have been, and continue to be, against any sort of liability actions, as long as we continue to see market forces improve software,” Schmidt wrote in a 2005 column for CNET. “Unfortunately, introducing vendor liability to solve security flaws hurts everybody, including employees, shareholders, and customers, because it raises costs and stifles innovation.”

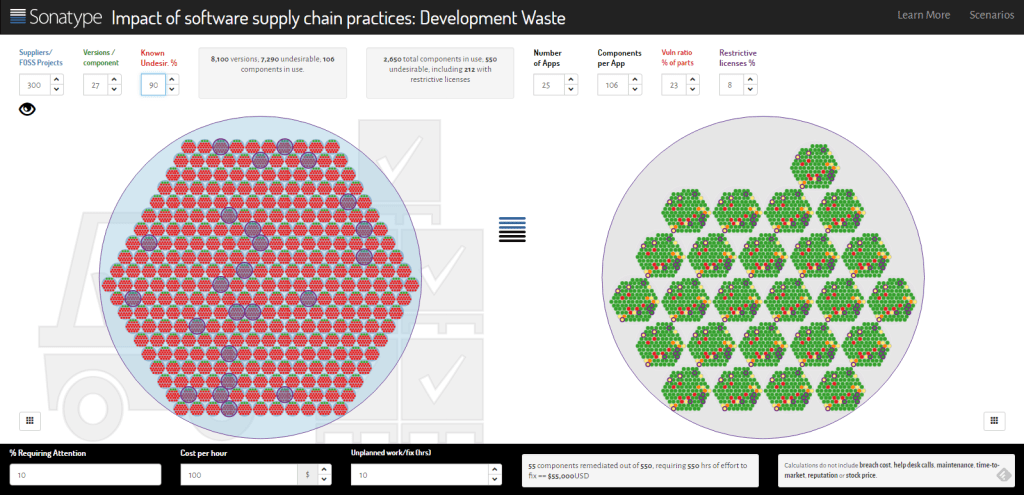

Sonatype’s software development waste calculator. Screenshot by Seth Rosenblatt/The Parallax

A growing appetite for regulation

Advocates of regulation say that argument is antiquated, in part, because today’s proprietary software is rarely developed entirely in-house. According to a report on the software supply chain by software development company Sonatype, download requests from open-source software distribution repositories increased from about 500 million in 2007 to 17.2 billion in 2015, spanning 105,000 projects and more than 1 million software versions.

In the report’s analysis of more than 1,500 software programs, it found that “a typical application has 24 known severe or critical security vulnerabilities and nine restrictive licenses” by the time it’s released to consumers. A restrictive license in open-source software can legally prevent developers from making changes or improvements to the software, even to make it safer.

The report also cites a study by Josh Corman, who recently transitioned from chief technology officer at Sonatype to director of the Cyber Statecraft Initiative at the Atlantic Council, and Dan Geer, chief information security officer of the CIA-affiliated venture capital fund In-Q-Tel, detailing technology companies’ growing reliance on open-source software libraries—and hackers’ increasing interest in vulnerabilities present in those libraries. It includes several examples of security vulnerabilities shipping in open-source code after a patch has been released.

Sonatype is not the first software evaluation company to tie vulnerabilities in proprietary software to separately developed code. In 2014, Veracode tested 5,300 applications, and found that open-source and third-party components introduced “an average of 24 known vulnerabilities into each Web application. Many of these vulnerabilities expose enterprises to significant cyberthreats such as data breaches, malware injections, and denial-of-service attacks,” the company said in a statement.

Companies that assemble code from these repositories and ultimately sell or give away proprietary software as apps, operating systems, or device firmware should be held liable for its safety to consumers, says Corman, who co-founded an initiative called I am the Cavalry to push government agencies and corporations to take a more active role in safeguarding consumers.

“Software doesn’t age like fine wine; it ages like milk. Software goes bad over time,” Corman says. “If we knew the [software] supply chain vulnerabilities, and how to patch and identify them, how much of the [hospital] ransomware payouts could have been avoided? People almost died because of that.”

Corman also compares software makers with car manufacturers.

“We don’t have voluntary minimum safety standards for cars; we have a mandatory minimum,” Corman says. “What tips the equation [for software] is the Internet of Things, because we now have bits and bytes meeting flesh and blood.”

He points to the efforts of Ralph Nader and others to force carmaker titans in Detroit, Tokyo, and Frankfurt to adopt a safety feature we take for granted today: the seat belt.

“When Ralph Nader [wrote] Unsafe at Any Speed, carmakers said that seat belts would kill the car industry,” Corman says. “But the opposite happened, and they now compete on safety features.”

Robert A. Lutz, who was a top executive at BMW, Ford Motor, Chrysler, and General Motors, conceded that point in an interview last year with The New York Times on the book’s 50th anniversary. “I don’t like Ralph Nader, and I didn’t like the book, but there was definitely a role for government in automotive safety.”

At least one major software vendor, Microsoft, has called for the software industry to establish standards by which it can be held liable. Although the company hasn’t explicitly called for regulation, it said in a June 2016 report that technology companies “must agree to norms that enhance trust” in their products.

“Companies must be clear that they will neither permit backdoors in products nor withhold patches, either of which would leave technology users exposed,” Microsoft said in the report.

Moussouris, citing initiatives such as Peiter and Sarah Zatko’s Cyber Independent Testing Lab, acknowledges a growing appetite for better self-policing and government regulation of software security.

“We’ve already seen some early events like this, where companies have been prosecuted for false advertising in their security claims,” she says. “We’re seeing FTC, FDA, DoD—a lot of the regulatory bodies, as well as main government arms—get really serious about enforcing cybersecurity.”

What regulation might look like

Lin says rules imposed either by a government agency or Congress are needed to ensure that software makers address code vulnerabilities in a timely manner.

“Drastic regulation—more radical than we’ve seen in the past—will have to play some role,” he says, because the courts have almost never sided with consumers over software violations.

Corman says companies should be held liable for problems that arise from distributing software with known vulnerabilities, as happened with Android 4.3 two years ago. At the time, Google’s refusal to fix a critical WebView flaw put nearly 1 billion Android users at risk. The WebView flaw, which gave hackers access to the deepest parts of the Android operating system, could be used to steal data from or take control of a device running it.

Regulation should begin, Corman says, with what he calls “software supply chain transparency.” Software companies should offer consumers a “list of ingredients,” he says, delineating the components and libraries they used. They should also list any known vulnerabilities and patches, and provide a simple, automated way to receive and install updates.
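To make the “list of ingredients” idea concrete, here is a minimal sketch in Python of what such a disclosure might contain. It is an illustration only, not a format proposed by Corman or any vendor: the `Component` fields, the `ingredients_report` function, the product and library names, and the `EXAMPLE-0001` identifier are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Component:
    """One third-party 'ingredient' shipped inside a product (hypothetical schema)."""
    name: str
    version: str
    known_vulnerabilities: List[str] = field(default_factory=list)
    patched_in: Optional[str] = None  # first version containing a fix, if one exists


def ingredients_report(product: str, components: List[Component]) -> str:
    """Render a plain-text ingredients list a vendor could publish with a release."""
    lines = [f"Ingredients for {product}:"]
    for c in components:
        if not c.known_vulnerabilities:
            status = "no known vulnerabilities"
        else:
            ids = ", ".join(c.known_vulnerabilities)
            fix = f"fixed in {c.patched_in}" if c.patched_in else "no patch available"
            status = f"known vulnerabilities: {ids} ({fix})"
        lines.append(f"- {c.name} {c.version}: {status}")
    return "\n".join(lines)


# Made-up product and libraries, for illustration only.
report = ingredients_report(
    "ExampleApp 2.0",
    [
        Component("libexample-xml", "1.4"),
        Component("libexample-tls", "0.9", ["EXAMPLE-0001"], patched_in="0.9.1"),
    ],
)
print(report)
```

A consumer or an automated updater reading this output could see at a glance that one bundled library carries a known flaw and that a patched version already exists.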

Corman isn’t the only one advocating for better transparency. The American Bankers Association, in conjunction with the Financial Services Sector Coordinating Council, the UL Cybersecurity Assurance Program, the Financial Services Information Sharing and Analysis Center, Mayo Clinic, Philips Healthcare, and an FDA workshop on health care have all pushed for rules and regulations nearly identical to those Corman proposes.

Congress has taken a stab too. In December 2014, Rep. Ed Royce (R-Calif.) proposed the Cyber Supply Chain Management and Transparency Act, co-sponsored by Rep. Lynn Jenkins (R-Kan.), seeking to enforce similar policies.

Recommendations from the UL Cybersecurity Assurance Program, which are more extensive than Corman’s, advocate that software companies run three kinds of tests before shipping to consumers: static code analysis, which examines the software code without running the program; fuzzing, which tests how software responds to large volumes of random input intended to crash it; and penetration testing, which is a more dynamic, real-world test of how software handles attempts by skilled hackers to crack its code.
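Of the three tests, fuzzing is the easiest to show in a few lines. The Python sketch below is a toy, not the UL program’s methodology: `parse_record` is a hypothetical parser with a deliberately planted bug, and `fuzz` is a bare-bones random-input loop, far simpler than the coverage-guided fuzzers used in practice.

```python
import random


def parse_record(data: bytes) -> int:
    """Hypothetical parser under test: the first byte is a length, the rest is payload."""
    length = data[0]  # planted bug: raises IndexError when the input is empty
    payload = data[1:1 + length]
    return len(payload)


def fuzz(target, runs=1000, max_len=8, seed=0):
    """Feed random byte strings to `target` and record every input that makes it raise."""
    rng = random.Random(seed)  # fixed seed so a crash can be reproduced
    crashes = []
    for _ in range(runs):
        size = rng.randrange(max_len + 1)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except Exception as exc:
            crashes.append((data, type(exc).__name__))
    return crashes


crashes = fuzz(parse_record)
print(f"{len(crashes)} crashing inputs found; first: {crashes[0]}")
```

Here the only input that crashes the parser is the empty one, a case that a developer testing with well-formed records might never think to try.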

“Nobody should hold you responsible for a zero-day” vulnerability, a previously unknown and unpatched security hole, Corman says. But if you’re using code sourced from “a 10-year-old, vulnerable library that has had a patch for the past seven years,” he adds, you should be held at least partially liable for the actions of hackers who exploit the resulting security flaws.

The bill from Reps. Royce and Jenkins died shortly after its introduction, at the end of the congressional session. However, Corman says, it was a step forward in a movement to help consumers better evaluate their software choices and delay the doomsday “killware” prediction.

Kaminsky, acknowledging that “more people have been killed crashing through windows than Windows crashing,” says the software industry is at a point at which it needs to work toward better safety controls.

“I don’t want someone to die for us to fix this clear-and-present threat to the existing economic health of the country,” he says. “I don’t need one dead body to deal with billions and billions of dollars in damage. It’s OK. Nobody needs to die. We can start fixing it today.”

Correction: An earlier version of this story misspelled the last name of the developers of the Cyber Independent Testing Lab. It is Zatko.